Nodes in a hashgraph network apply a 2/3 threshold in the virtual voting procedure – as soon as more than this fraction of consistent votes (or vote stake) are counted on the fame of a particular witness, then that election is complete. And once a set of famous witnesses are decided for a given round, then events that are ancestors of those witnesses reach consensus and the consensus timestamp and consensus order for each of those events can be calculated with complete and unequivocal certainty.

This type of threshold (and associated finality of consensus) is typical of Byzantine Fault Tolerant (BFT) consensus algorithms, in contrast to Proof of Work-based systems where, instead of finality, participants only gradually build up confidence that a transaction won’t be rolled back – this confidence coming through successive blocks being added to the chain.

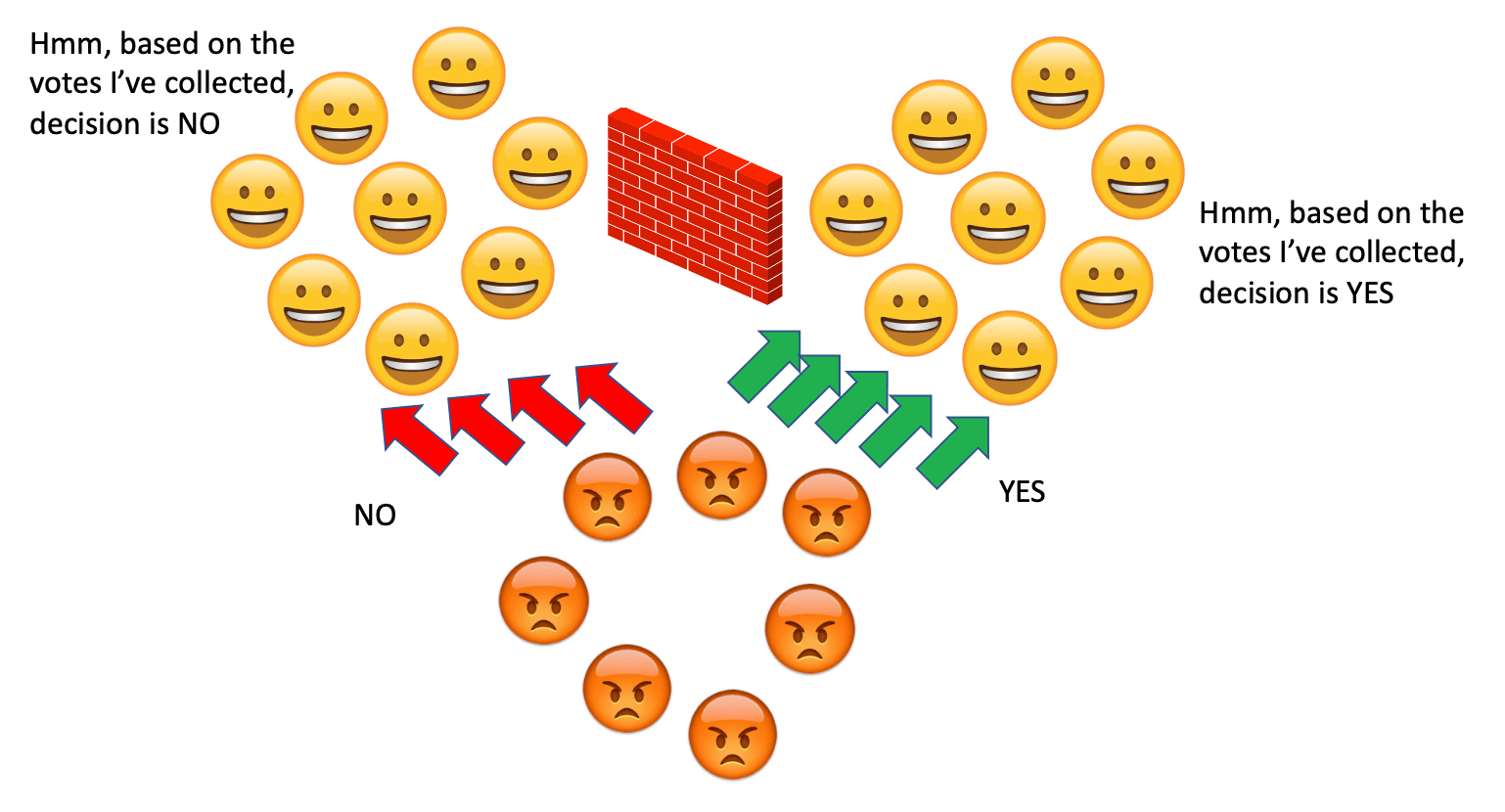

In a voting-based consensus algorithm, the general pattern is that the nodes will cast, collect, and count votes on some, typically binary, proposition. Each individual node, as it collects and counts the votes of other nodes, has to know when to stop collecting and make a decision on the election.

It would be bad idea to have the nodes only stop collecting after receiving votes from every other node, as just a single node going offline would cause consensus to halt – we will come back to this idea. It would also be a bad idea to have nodes stop collecting votes after receiving only a single vote from some other node – it would be hard to interpret that as consensus.

So instead, each node will stop collecting votes once the votes they have collected have met some threshold criteria.

A hashgraph node collects (remember that the votes are virtual, no explicit ballots are being cast) the votes on the YES/NO proposition on the fame of a particular witness event in the hashgraph. When more than 2/3 of the network have voted as YES (or as many have voted NO) on the fame of a particular witness , that particular election is over and decided accordingly. Nodes then move on to the next election, fully confident that the other nodes, independently following the same procedure, will come to the same results.

So why 2/3 and not some other fraction? Couldn’t the election stop at just over 1/2? Or wouldn’t security be enhanced if the threshold were higher, for instance, 9/10?

It turns out that requiring nodes count just over 2/3 votes is the best compromise between defending against two different types of attacks.

Safety and Liveness

There are two broad classes of faults that a consensus algorithm must protect against – safety faults and liveness faults.

Safety is a guarantee than “nothing bad will happen, ever”. In the context of consensus, safety refers to different parts of the community being able to avoid establishing different consensus values. If an attacker is able to convince some honest nodes that the consensus is A and a different group of honest nodes that the consensus is B, then that is a safety fault. A double spend is the archetypical safety attack – the attacker creates two different realities and spends the same coin in each.

Liveness is a guarantee that “something good will happen, eventually”. In the context of consensus, liveness refers to the community being able to progress towards consensus. If an attacker is able to stop consensus from being established, then it is a liveness fault. In leader-based systems, a Distributed Denial of Service (DDoS) attack against the current leader could create a liveness fault.

Generally, defending against a safety attack makes a liveness attack easier. And vice versa.

Returning to voting, we can better defend against safety attacks by raising the vote threshold, that is, requiring nodes count more votes before deciding on an election. But raising the threshold makes a liveness attack easier because an attacker will have less difficulty stopping consensus – they need to prevent fewer nodes from participating. As an example, if the threshold were 9/10, then an attacker need only prevent 1 node (assuming there were 10 nodes in the network) from casting votes in order to stop the remaining nodes from reaching the threshold. And perhaps the attacker is one of the nodes itself and need do nothing more than turning off to remove one vote.

On the other hand, if we wish to defend against a liveness attack by lowering the vote threshold, then we make safety attacks easier. If we collect fewer votes before deciding an election, then we effectively give any dishonest nodes greater relative influence to sway the election.

So there is a tension between defending against safety and liveness attacks.

But we still haven’t answered the question of why hashgraph elections are decided after 2/3 of votes are collected.

We can justify the choice by considering a hypothetical network and determining what attacks might be possible for different vote threshold values. For each threshold value, we can consider what attacks are possible and how many dishonest nodes would be required to make those attacks feasible. In general, we want to make attacks more difficult by requiring that the attacker be able to control or influence more nodes – ideally, an attacker will require a very high fraction of the network to be dishonest.

Let’s start by assuming nodes are required to talk to more than 59% (so 60% would be sufficient) of the other nodes and collect their votes before deciding that some election is over. It’s clear that, for this threshold value, if an attacker can prevent 41% of the network from communicating, then they can stop the honest nodes from being able to reach consensus. That is, they can prevent liveness. If the attacker actually controls 41% of the nodes, then they need do nothing more than turn off their computers. If they don’t control the nodes, then an attacker can try to DDoS the honest nodes and prevent their participation in consensus in that way.

What safety attack is possible with this 59% threshold? And how many dishonest nodes are needed to pull it off?

A safety attack will have some number of dishonest nodes convince different portions of the remaining honest nodes of different consensus values, for instance, convince one half of the honest nodes that the consensus is YES, whilst the other half believe the consensus is NO. Necessarily, the dishonest nodes will vote YES when communicating with some honest nodes, and NO when communicating with the other honest nodes. There has to be enough dishonest nodes lying in this manner to allow the two groups of honest nodes to reach the required 60% . If not, then the honest nodes won’t believe the election is over. And, of course, the two groups of honest nodes can’t be allowed to talk between themselves or the attack would be apparent.

The situation is represented below:

If ‘h’ is the fraction of honest nodes and ‘d’ the dishonest, and we assume that the honest nodes are divided equally, then we must have:

h/2 + d = 0.6

If the two sets of honest nodes are to be convinced of the dishonest node’s lies

as h + d = 1

then:

(1-d)/2 + d = 0.6

1/2 – d/2 + d = 0.6

1/2 + d/2 = 0.6

d/2 = 0.1

d= 0.2

If there are 20% dishonest nodes, then a safety attack is possible with the choice of 59% for threshold, if the honest nodes can be partitioned as shown. The dishonest 20% lie to each honest 40% camp, allowing each 40% to reach the requisite 60% and be convinced the election is over. And, of course, each 40% believes the result of the election is different – a fork.

While the choice of 59% for the threshold provides attractive resistance to liveness attacks (taking 40% of the network offline seems a high bar) it makes a safety attack relatively easy (needing only 20% of nodes to lie).

We can repeat the exercise for other choices of the vote threshold. For instance, for a threshold of 79%, a liveness attack is possible by only 21% dishonest nodes, a safety attack possible if there were 60% dishonest nodes.

The 79% choice makes a liveness attack easier, but significantly raises the bar for a safety attack.

Thus, the higher the threshold we choose, the easier a liveness attack, but the harder a safety attack.

What is the situation if we choose the threshold of 2/3 and require nodes collect just over this, for instance 67%? It turns out for this particular threshold then both that a liveness and safety attacks are possible with 1/3 dishonest nodes.

It is for the 2/3 value of a vote threshold that we make safety and liveness attacks equally difficult, specifically that the attacker must control or be able to take offline 1/3 of the network.

This is the optimal value if we want to guard against both safety and liveness attacks. If we were concerned only with one or the other, then we would choose a different threshold value.

Honest nodes counting just over 2/3 of the votes is the best compromise.

It is for this reason that hashgraph nodes count just over 2/3 of virtual votes when determining the fame of witnesses (and so determining which timestamps are used in calculating a median timestamp for events).

Choosing the threshold 2/3 is the best you can do. And even that best case allows both safety and liveness attacks if there are 1/3 dishonest nodes.

Not all consensus algorithms can achieve this best case; that is, to provide both safety and liveness even when confronted with just less than 1/3 dishonest nodes.

The Swirlds’ whitepaper includes a proof that shows that, as long as less than 1/3 of the network are dishonest, hashgraph is Byzantine Fault Tolerant (BFT). Additionally, because the hashgraph algorithm does not make any assumptions about how long messages take to cross the network (as long as they eventually do), the protocol is completely asynchronous (ABFT).

Asynchronous BFT is truly the best a consensus algorithm can achieve – guaranteeing both safety and liveness for any amount of dishonest nodes up to 1/3 of the network.